Added to the piLagTester: test image generators to check for scaling and clipping

Of significant interest to retro gaming enthusiasts is how a display upscales low-resolution input (such as 480p) to its native resolution. Somewhat shockingly, I've even found that some panels will scale/clip native resolution input too!

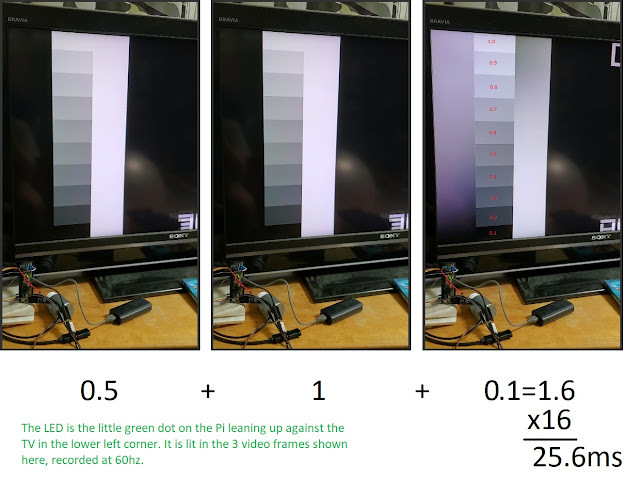

To that end I've written some simple test image generating programs for my piLagTester program (and the upcoming piLagTesterPro). Obviously this has little to do with display lag, but it's a sensible place to stash them given that they also run on a Raspberry Pi (chosen for maximal physical portability) and relate to display characterization.

The first is lines, which just draws a set of 45 degree lines on the screen, starting at the upper left corner. This gives an easy way to see if the upscaling is picture perfect or not.

Here are two examples of 45 degree lines that aren't clean (none of these are camera artifacts).

The nice thing is that the pattern is generated pixel perfect on demand for the current resolution, so you can do tv480i; lines at the command prompt to test that resolution, or tv1080p; lines, etc. (The semicolon allows two commands on one line; you could press Enter in place of the ; and run them one at a time.)
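For anyone curious what a pattern like this looks like in code, here is a minimal Python sketch. It is not the actual lines program: the function names, the 8 pixel line spacing, the down-right direction, and the PGM output format are all my assumptions for illustration. The only thing taken from the description above is that the lines run at 45 degrees and start at the upper left corner.

```python
def diagonal_lines(width, height, spacing=8):
    """Return rows of 0/255 gray values forming 45 degree lines.

    Assumption: a pixel is lit when (x - y) is a multiple of `spacing`,
    so one line passes exactly through the upper left corner (0, 0)
    and every line steps one pixel right for each pixel down.
    """
    if spacing < 1:
        raise ValueError("spacing must be at least 1")
    return [
        [255 if (x - y) % spacing == 0 else 0 for x in range(width)]
        for y in range(height)
    ]


def write_pgm(path, rows):
    """Save the pattern as a binary PGM (8-bit grayscale) image."""
    height, width = len(rows), len(rows[0])
    with open(path, "wb") as f:
        f.write(f"P5\n{width} {height}\n255\n".encode("ascii"))
        for row in rows:
            f.write(bytes(row))
```

Something like write_pgm("lines.pgm", diagonal_lines(720, 480)) would produce an image you can inspect on a PC. Because every lit pixel sits exactly one step right and one step down from the previous one, any unevenness in the staircase on screen comes from the scaler, not the source.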

The other test generator is called dots. By default it produces a checkerboard where each check is 1 pixel. On most modern screens this is going to look gray. The question is, does it look uniform gray, or does the gray vary vertically or horizontally, indicating sampling issues in the upscaler. I literally can't take a photo of this, because it's a camera's worst nightmare.

The program has a bonus option that lets you set the check size, which can be handy: sometimes the upscaling issues only appear with 2 or 3 pixel checks. And if you use a check size of 20 or more, it doubles as a clipping tester, which I can show you, though it too is hard to photograph. Below is an example with 20 pixel wide checks:

In this photo you can see that the first check that's fully visible starts 40 pixels from the left edge. Checks are spaced every 20 pixels, so that means that about (can you guess?)... 20 pixels are cut off here. Note: the same resolution changing options work here as with lines (e.g. tv720p;check 20)
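The checkerboard itself is simple enough to sketch in a few lines of Python. Again, this is only an illustration and not the real program: the function names and the choice of a white check in the upper left corner are assumptions. The check parameter plays the role of the check size option described above, and mean_level shows why a 1 pixel checkerboard should read as mid gray when the scaler samples it evenly.

```python
def checkerboard(width, height, check=1):
    """Return rows of 0/255 gray values forming a checkerboard.

    `check` is the side length of each square in pixels (default 1).
    Assumption: the upper left check is white.
    """
    if check < 1:
        raise ValueError("check size must be at least 1")
    return [
        [255 if ((x // check) + (y // check)) % 2 == 0 else 0
         for x in range(width)]
        for y in range(height)
    ]


def mean_level(rows):
    """Average gray level of the pattern (0 = black, 255 = white)."""
    total = sum(sum(row) for row in rows)
    return total / (len(rows) * len(rows[0]))
```

With an even width and height, half the pixels are white and half are black, so the average works out to 127.5. A screen that shows bands of lighter or darker gray is therefore weighting some source pixels more than others.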

If you want to give them a try on your Raspberry Pi, download them here.
